Hello, Can you please explain this question? I have really some troubles to see why the functions are convex! Thank you for your help! :)

PSD require that the matrix has non-negative eigenvalues, which is the case in your example (2 and 0).

Hi,

thanks for your question.

note that matrix vv^T corresponds of elements

\( \begin{pmatrix} v_1^2 & v_1 v_2\\ v_1 v_2 & v_2^2 \end{pmatrix}\)

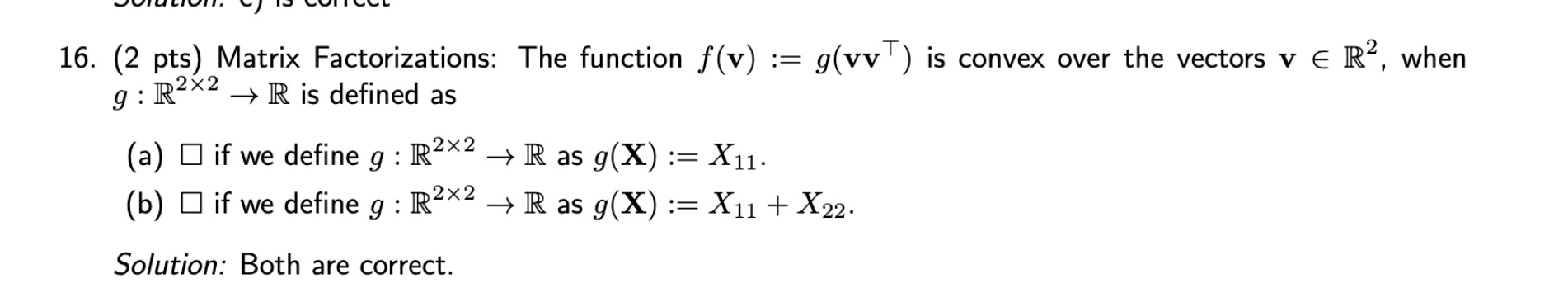

Thus the option (a) would have \(g(vv^T) = v_1^2\) and it is a convex function (e.g. its hessian is PSD)

In option (b) we have \(g(vv^T) = v_1^2+v_2^2\) and it is also a convex function as a sum of two convex functions.

Thank you for your answer. However, can you give the hessian of option (a)? Because I have that the gradient is (2*v1, 0) and the hessian is 0 everywhere except at H11 where it is 2. So then we have one eigen value that is 0 so it is not PSD.

Note that PSD is positive semi-definite, that means that eigenvalies should be non-negative, but could be zero.

(as a simple example, constant function is convex but has zero hessian)

Q16 exam 2016

Hello,

Can you please explain this question? I am having real trouble seeing why the functions are convex!

Thank you for your help! :)

Hi,

Thanks for your question.

Note that the matrix \(vv^T\) consists of the elements

\( \begin{pmatrix} v_1^2 & v_1 v_2\\ v_1 v_2 & v_2^2 \end{pmatrix}\)

Thus in option (a) we have \(g(vv^T) = v_1^2\), which is a convex function of \(v\) (e.g. because its Hessian is PSD).

In option (b) we have \(g(vv^T) = v_1^2+v_2^2\), which is also convex, as a sum of two convex functions.

Thank you for your answer.

However, could you give the Hessian for option (a)? I get the gradient \((2v_1, 0)\), and a Hessian that is 0 everywhere except the entry \(H_{11}\), which is 2. That gives one eigenvalue equal to 0, so it would not be PSD.

PSD only requires that the matrix has non-negative eigenvalues, which is the case in your example (2 and 0).
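
Written out for option (a), treating \(g\) as a function of \(v = (v_1, v_2)\), the computation looks as follows. The last step, the quadratic form with an arbitrary vector \(x = (x_1, x_2)\), is an added illustration and not part of the original replies:

\[
g(v) = v_1^2, \qquad
\nabla g(v) = \begin{pmatrix} 2 v_1 \\ 0 \end{pmatrix}, \qquad
H = \begin{pmatrix} 2 & 0 \\ 0 & 0 \end{pmatrix}, \qquad
x^T H x = 2 x_1^2 \ge 0 \ \text{ for all } x .
\]

Since \(H\) is diagonal, its eigenvalues are the diagonal entries 2 and 0, and neither is negative.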

Note that PSD means positive semi-definite: the eigenvalues must be non-negative, but they may be zero.

(As a simple example, a constant function is convex but has a zero Hessian.)
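
If it helps, the eigenvalue check can also be done numerically. This is only a minimal sketch, assuming NumPy is available; the helper `is_psd`, the variable names, and the tolerance are my own choices for illustration and do not come from the exam.

```python
import numpy as np

# Hessians with respect to v = (v_1, v_2). Both are constant matrices
# because g is a quadratic function of v in each option.
hessian_a = np.array([[2.0, 0.0],
                      [0.0, 0.0]])  # option (a): g = v_1^2
hessian_b = np.array([[2.0, 0.0],
                      [0.0, 2.0]])  # option (b): g = v_1^2 + v_2^2
hessian_const = np.zeros((2, 2))    # Hessian of a constant function


def is_psd(matrix, tol=1e-12):
    """Return True if the symmetric matrix has no eigenvalue below -tol.

    Hypothetical helper for illustration; eigvalsh assumes a symmetric input.
    """
    eigenvalues = np.linalg.eigvalsh(matrix)
    return bool(np.all(eigenvalues >= -tol))


for name, hessian in [("(a)", hessian_a), ("(b)", hessian_b), ("constant", hessian_const)]:
    print(name, np.linalg.eigvalsh(hessian), is_psd(hessian))
```

A zero eigenvalue passes the check, while any strictly negative eigenvalue (for example in `np.diag([1.0, -1.0])`) fails it.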
