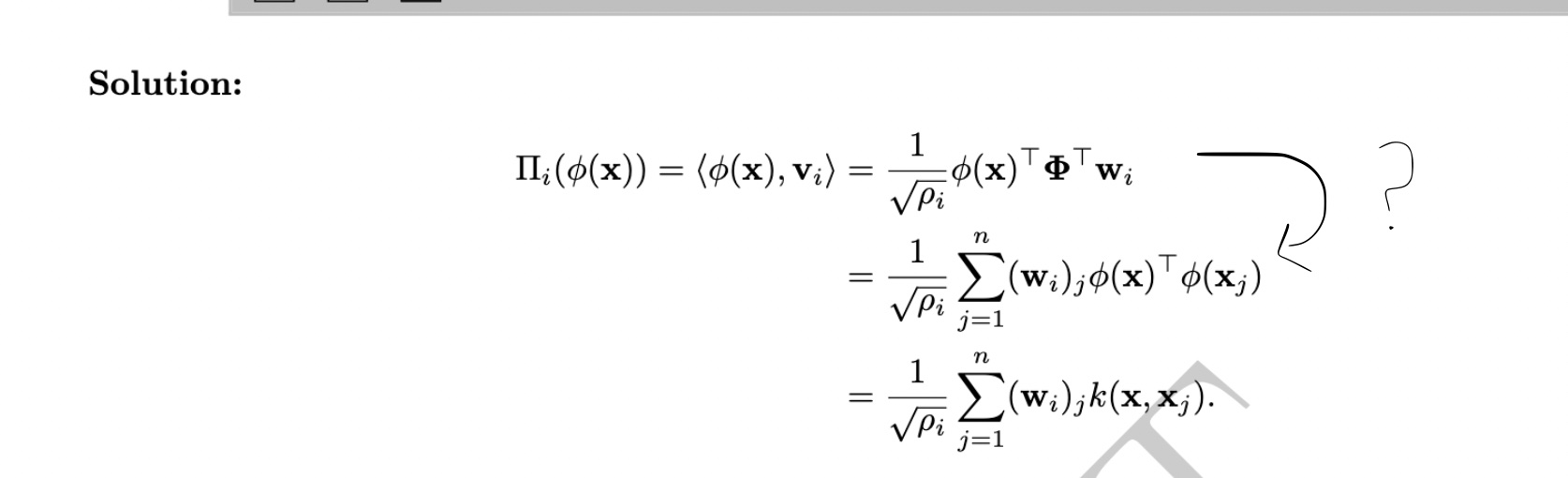

Q45 and Q46 exam 2020

Hello,

Could you give more detail on how we get from the first line to the second in the solution of Q45, please? I do not understand why we sum over n: \(\phi(x)^T\) has size \(1\times H\) and \(\Phi^T w_i\) has size \(H\times 1\), so I would have summed over h…?

And for Q46, why do we need to find the N principal components of K? We only need w_i and p_i, so we only need the eigenvalues and the eigenvectors of K. Maybe I don't really understand the definition of principal components…

Thank you for your help!

Hi, note that the sum here is taken over all the datapoints \(x_j\), of which there are exactly n.

The matrix \(K\) has shape \(n\times n\) (see the solution of Q42). Thus each of its eigenvectors lies in \(\mathbb{R}^n\) (see the definition of an eigenvector: for the product \(K w\) to be defined, the shapes must match). \((w_i)_j\) denotes the j-th coordinate of the vector \(w_i\).
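To make the step explicit, here is a sketch of the expansion, assuming that \(\Phi\) is the \(n\times H\) matrix whose j-th row is \(\phi(x_j)^T\) and that the kernel is \(k(x, x') = \phi(x)^T\phi(x')\) (both are assumptions about the notation of the correction, and any normalization constant is left out):

\[
\Phi^T w_i = \sum_{j=1}^{n} (w_i)_j\,\phi(x_j) \in \mathbb{R}^H,
\qquad
\phi(x)^T \Phi^T w_i = \sum_{j=1}^{n} (w_i)_j\,\phi(x)^T\phi(x_j) = \sum_{j=1}^{n} (w_i)_j\,k(x, x_j).
\]

So the sum over h is still there, but it is hidden inside each inner product \(\phi(x)^T\phi(x_j)\), which is exactly what the kernel evaluates. The visible sum over j comes from the product \(\Phi^T w_i\).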

The index i labels one of the eigenvectors and varies between 1 and K (but we don't sum over i here).
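If it helps, the shapes can be checked numerically. This is only a minimal sketch with random data, assuming a linear feature map so that \(K = \Phi\Phi^T\); the sizes `n` and `H` and the variable names are made up for illustration and are not taken from the exam.

```python
import numpy as np

rng = np.random.default_rng(0)
n, H = 5, 3                       # hypothetical sizes: n datapoints, H features
Phi = rng.normal(size=(n, H))     # assumed layout: row j is phi(x_j)^T
phi_x = rng.normal(size=H)        # feature vector phi(x) of a new point x

K = Phi @ Phi.T                   # kernel matrix, shape (n, n)
eigvals, eigvecs = np.linalg.eigh(K)
w_i = eigvecs[:, -1]              # one eigenvector of K, shape (n,)

# Direct computation: phi(x)^T (Phi^T w_i), an inner product in R^H.
direct = phi_x @ (Phi.T @ w_i)

# Kernel form: sum over the n datapoints of (w_i)_j * k(x, x_j).
k_x = Phi @ phi_x                 # k(x, x_j) for j = 1..n, shape (n,)
via_kernel = sum(w_i[j] * k_x[j] for j in range(n))

print(K.shape, w_i.shape, np.isclose(direct, via_kernel))
```

Both expressions give the same number, and the eigenvector has n coordinates, one per datapoint.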
