Sorry I did not see the lecture notes. I agree if you use the matrix \(U\) then \(S^{(k)}\) has indeed the same dimensions as X (D by N) and then the dimensions would not correspond. To make the dimensions work out you need to only keep the directions that have the k largest singular values.

Anyway, there are different of conventions of writing SVD (as long as you know what you are doing it is not a problem). Plus in practice there is no benefit in keeping zeroes if they are uninformative.

General advice : data science/ML is not pure math, it is applied math, so if things do not work out you make them.

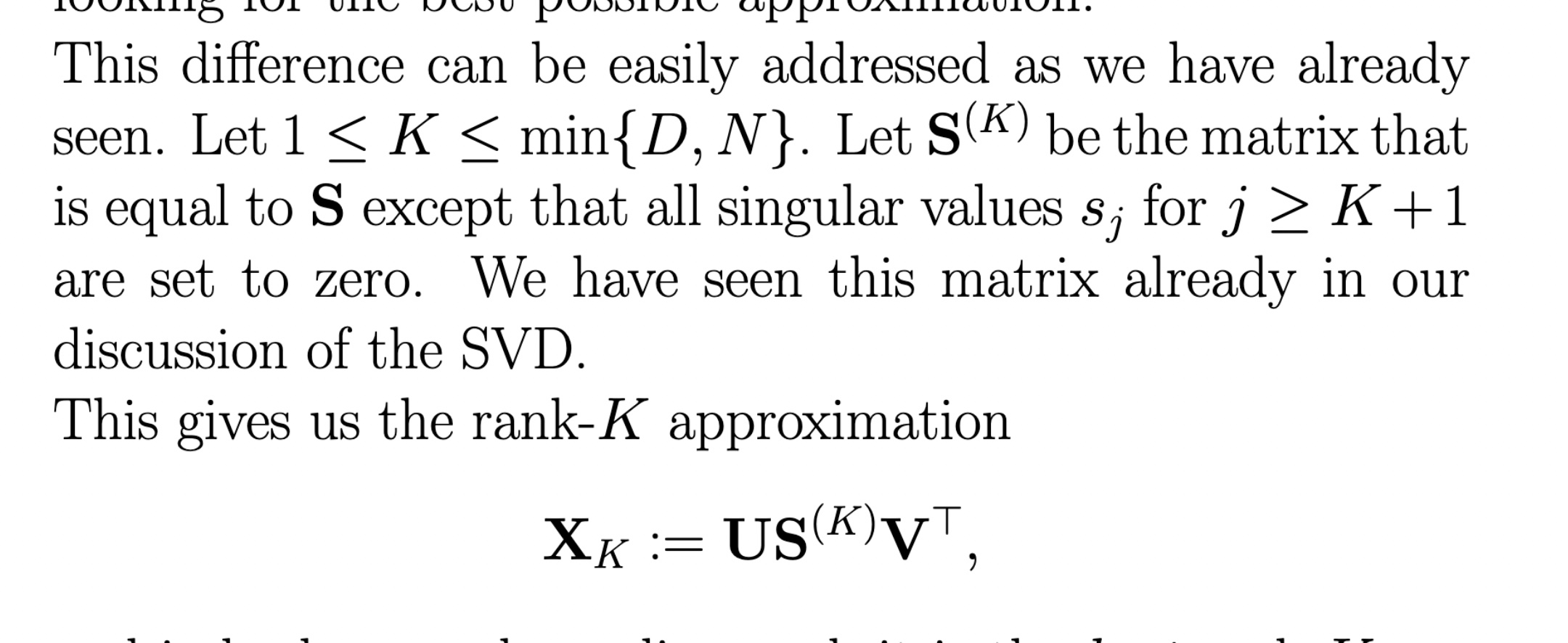

\(S^{(k)}\) would be \(K\times N\) matrix or in other conventions of writing SVD a \(K\times K\) matrix. Anyway the main point here is that we only keep the dimensions corresponding to largest singular values in both \(U\) and \(S\), so everything adds up just fine.

I do not agree ! It is written just above that S^(k) is equal to S except that some singular values are 0 but it does not affect the dimension… So unless the definition of S^(k) has changed between the two paragraphs (but it is not mentioned…), but if the definition did not change, I do not see how once S^(k) is multiplied by U and once by U_k.

Sorry I did not see the lecture notes. I agree if you use the matrix \(U\) then \(S^{(k)}\) has indeed the same dimensions as X (D by N) and then the dimensions would not correspond. To make the dimensions work out you need to only keep the directions that have the k largest singular values.

Anyway, there are different of conventions of writing SVD (as long as you know what you are doing it is not a problem). Plus in practice there is no benefit in keeping zeroes if they are uninformative.

General advice : data science/ML is not pure math, it is applied math, so if things do not work out you make them.

SVD and matrix factorization

Hello,

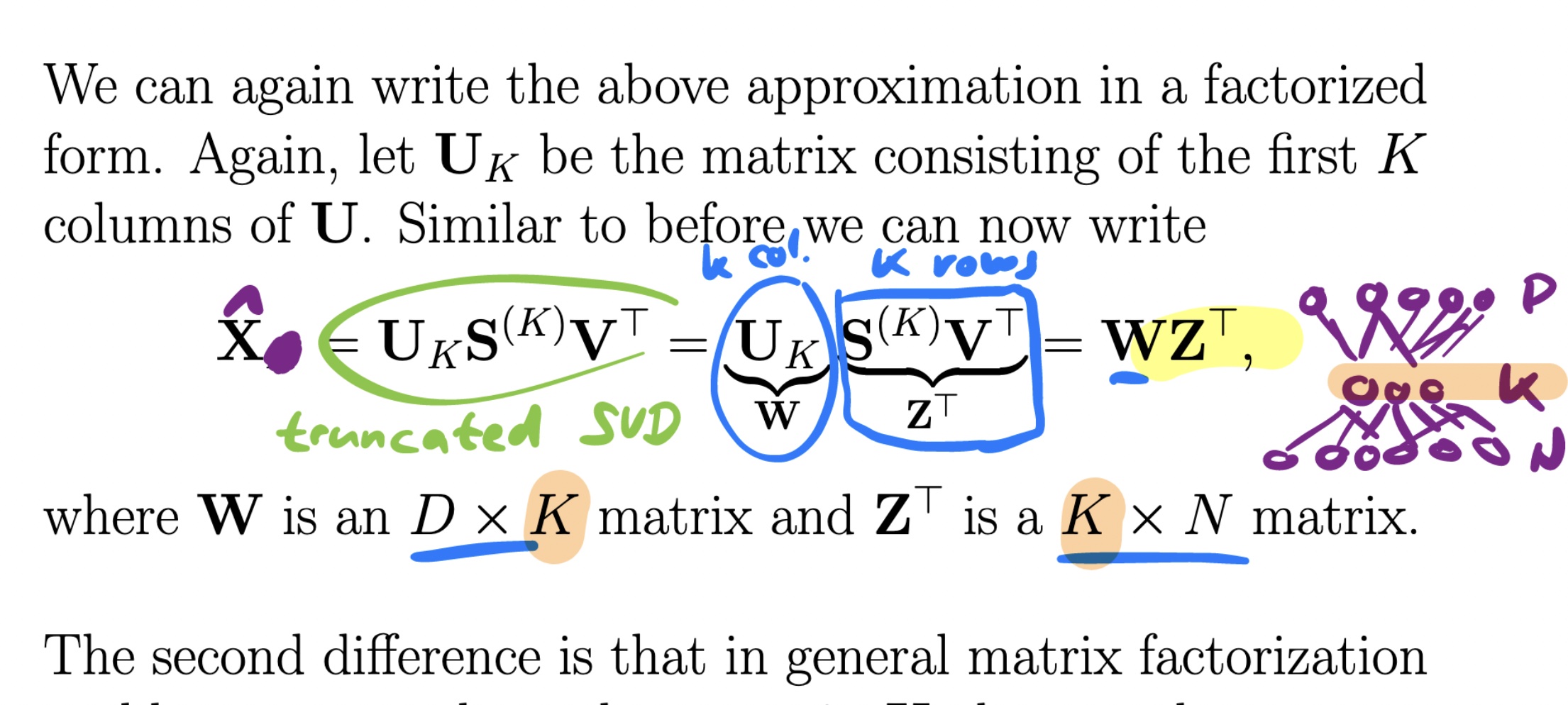

I clearly have a problem to understand how the Z_T matrix (S^(K)V_T) can be a KxN matrix as S^(K) is a DxN matrix?!!

Can you explain me please?

Thank you.

Sorry I did not see the lecture notes. I agree if you use the matrix \(U\) then \(S^{(k)}\) has indeed the same dimensions as X (D by N) and then the dimensions would not correspond. To make the dimensions work out you need to only keep the directions that have the k largest singular values.

Anyway, there are different of conventions of writing SVD (as long as you know what you are doing it is not a problem). Plus in practice there is no benefit in keeping zeroes if they are uninformative.

General advice : data science/ML is not pure math, it is applied math, so if things do not work out you make them.

1

\(S^{(k)}\) would be \(K\times N\) matrix or in other conventions of writing SVD a \(K\times K\) matrix. Anyway the main point here is that we only keep the dimensions corresponding to largest singular values in both \(U\) and \(S\), so everything adds up just fine.

I do not agree ! It is written just above that S^(k) is equal to S except that some singular values are 0 but it does not affect the dimension… So unless the definition of S^(k) has changed between the two paragraphs (but it is not mentioned…), but if the definition did not change, I do not see how once S^(k) is multiplied by U and once by U_k.

1

Sorry I did not see the lecture notes. I agree if you use the matrix \(U\) then \(S^{(k)}\) has indeed the same dimensions as X (D by N) and then the dimensions would not correspond. To make the dimensions work out you need to only keep the directions that have the k largest singular values.

Anyway, there are different of conventions of writing SVD (as long as you know what you are doing it is not a problem). Plus in practice there is no benefit in keeping zeroes if they are uninformative.

General advice : data science/ML is not pure math, it is applied math, so if things do not work out you make them.

1

Add comment