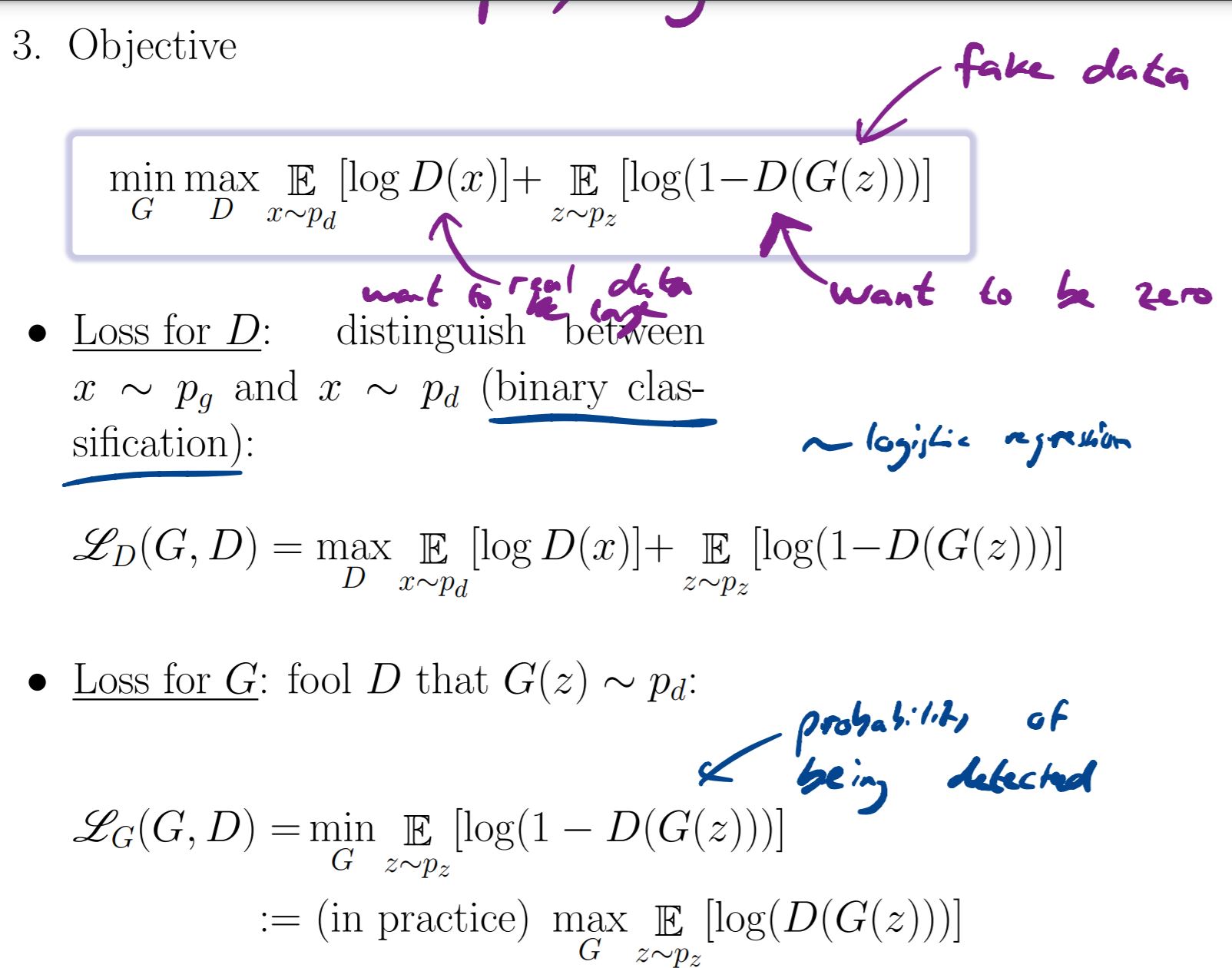

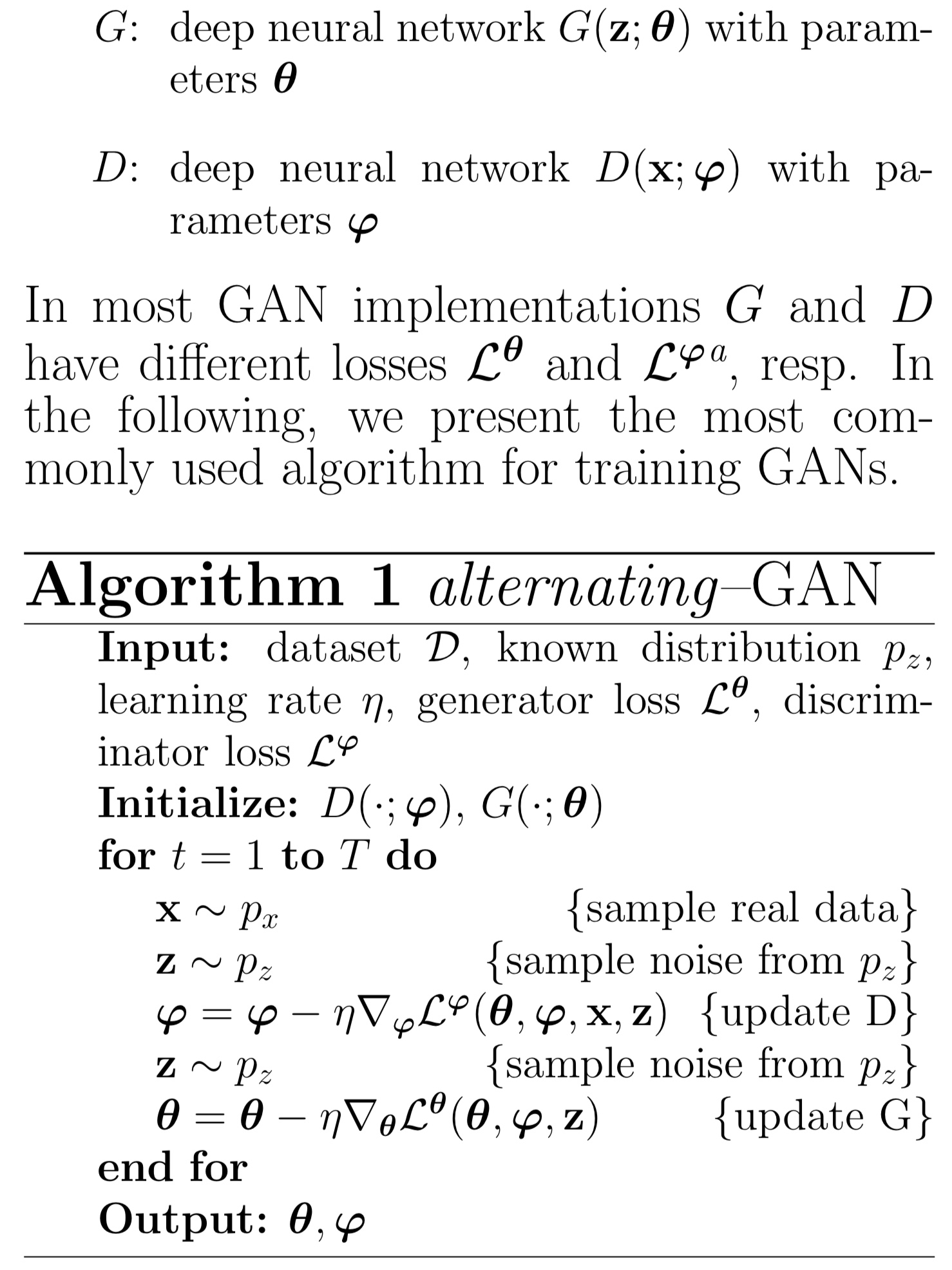

GAN loss functions

Hello,

I am a bit lost trying to understand the different formulations used to describe GANs. In this first screenshot of the lecture notes:

I don't understand the link between the individual loss functions and the objective. How do we obtain the objective from the respective loss functions? Additionally, it doesn't appear that L_theta = -L_phi, as needed in zero-sum games. The Alternating GAN algorithm does state that L_theta = -L_phi, but then how come, in the algorithm, L_theta depends on x but L_phi does not? And what are the loss functions used in the algorithm, then?

Thank you very much for your help

Hi, I am not sure I understand your question well.

To obtain the loss for G, note that G does not appear in the first term of the 'joint' min-max objective. That term is therefore a constant with respect to G's parameters, and we can ignore it, since the derivative of a constant is 0.
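As a sketch of that step, assuming the standard GAN objective: the symbols V, L_D, L_G, p_data and p_z below are my own notation and may not match the lecture notes exactly.

```latex
% Joint min-max objective (standard form, assumed here):
\min_G \max_D \; V(D, G)
  = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]

% Zero-sum losses: D maximizes V, G minimizes V.
L_D = -V(D, G), \qquad L_G = V(D, G) = -L_D

% The first term of V does not depend on G, so it vanishes under \nabla_G:
\nabla_G L_G
  = \nabla_G \, \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
```

So the loss usually written for G, with the data term dropped, differs from -L_D only by a term that is constant in G. The two give the same gradient for G, which is why the individual losses can look non-zero-sum while the underlying game still is.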

For Alg. 1, please note that there is a typo; it should be as follows:

Please let us know if it's unclear

Thank you for your response,

I understand the part about the gradient now, but I still don't understand the link between the objective function and the individual losses. We have a loss for D and a loss for G, but I don't understand how these are equivalent to the total objective function, which is a min-max over some combination of the two.

thanks again
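A small numerical check may help with the follow-up question above. This is only a sketch under stated assumptions: it uses the standard GAN value function, a toy one-dimensional logistic discriminator and an affine generator, and every name in it (`value`, `fake_term_only`, `numeric_grad`, the parameter values) is hypothetical rather than taken from the lecture notes. It shows that the zero-sum loss for G and the "reduced" loss without the data term give the same gradient for G.

```python
import numpy as np

def sigmoid(t):
    return 1.0 / (1.0 + np.exp(-t))

def D(x, d_params):
    # Toy discriminator: logistic regression on a scalar input (assumption).
    w, b = d_params
    return sigmoid(w * x + b)

def G(z, g_params):
    # Toy generator: affine map of the noise (assumption).
    a, c = g_params
    return a * z + c

def value(d_params, g_params, x, z):
    # Monte Carlo estimate of V(D, G) = E[log D(x)] + E[log(1 - D(G(z)))].
    real_term = np.mean(np.log(D(x, d_params)))
    fake_term = np.mean(np.log(1.0 - D(G(z, g_params), d_params)))
    return real_term + fake_term

def fake_term_only(d_params, g_params, z):
    # "Reduced" generator loss: the data term is dropped.
    return np.mean(np.log(1.0 - D(G(z, g_params), d_params)))

def numeric_grad(f, params, eps=1e-6):
    # Central finite differences, one coordinate at a time.
    params = np.asarray(params, dtype=float)
    grad = np.zeros_like(params)
    for i in range(params.size):
        step = np.zeros_like(params)
        step[i] = eps
        grad[i] = (f(params + step) - f(params - step)) / (2 * eps)
    return grad

rng = np.random.default_rng(0)
x = rng.normal(2.0, 1.0, size=256)   # stand-in for real data
z = rng.normal(0.0, 1.0, size=256)   # noise samples
d_params = np.array([0.7, -0.3])
g_params = np.array([1.5, 0.2])

# Zero-sum losses: D maximizes V, G minimizes V.
loss_G = value(d_params, g_params, x, z)
loss_D = -loss_G

# Gradients with respect to the generator parameters only.
grad_full = numeric_grad(lambda g: value(d_params, g, x, z), g_params)
grad_reduced = numeric_grad(lambda g: fake_term_only(d_params, g, z), g_params)

print("grad of full L_G   :", grad_full)
print("grad of reduced L_G:", grad_reduced)
```

The two printed gradients should agree up to finite-difference error, because the data term does not depend on the generator parameters. The discriminator, by contrast, needs both terms, which is why its loss depends on x.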
