Problem 16 exams 2018 :

Can you explain to me please why the first and last answers are not correct.

I thought that Glove could be interpreted as a matrix factorization problem and that such a problem can be solved using SVD (as long as the matrix is complete).

For the last answer, I thought that the bag of word increase (generally) the dimension of the data and that logistic regression complexity depends on this dimension D.

GloVe

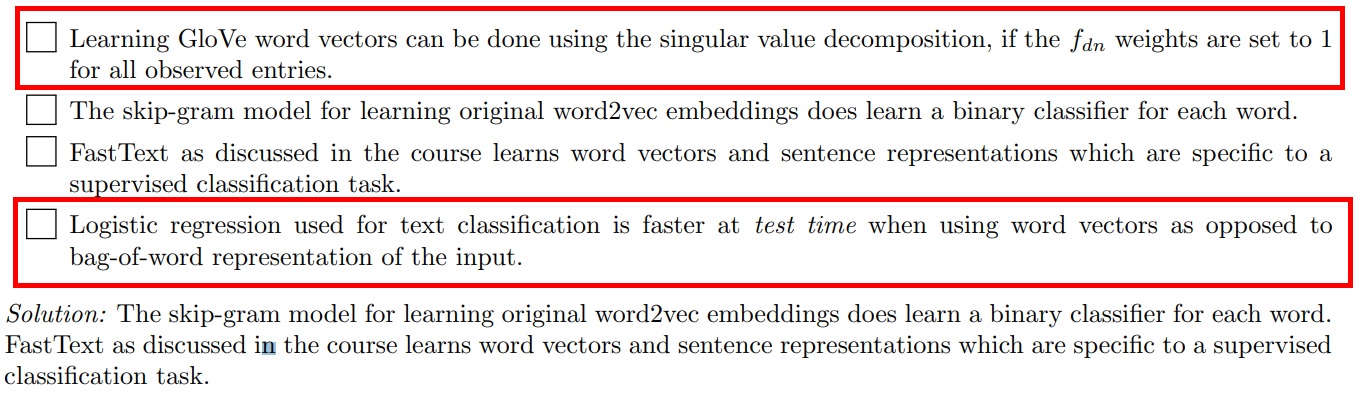

In Week 13 part 2 minute 23:51, professor Jaggi mentions that we could learn word vectors by filling the missing entries with zeros and then doing SVD. However, these word vectors are not GloVe word vectors (and don't perform as well).

The biggest difference is that in GloVe we don't use Xdn we use the log(#co-occurence (wd,wn))

Test time

I think "bag of word increase (generally) the dimension of the data and that logistic regression complexity depends on this dimension D" is true for training time but does not really matter at testing time

agreed. adding that

1) is wrong because glove crucially needs that the unobserved entries are not counted (similarly, the standard recommender system also can not be obtained with SVD)

4) is wrong since at test time, logistic regression of a bag-of-words input vector (which is very sparse, having only one or a few non-zeros) is much faster than of a dense input such as a word vector. also lookup of word vectors will take time

Questions on Glove

Problem 16 exams 2018 :

Can you explain to me please why the first and last answers are not correct.

I thought that Glove could be interpreted as a matrix factorization problem and that such a problem can be solved using SVD (as long as the matrix is complete).

For the last answer, I thought that the bag of word increase (generally) the dimension of the data and that logistic regression complexity depends on this dimension D.

Thank you in advance.

TAs correct me if I am wrong:

In Week 13 part 2 minute 23:51, professor Jaggi mentions that we could learn word vectors by filling the missing entries with zeros and then doing SVD. However, these word vectors are not GloVe word vectors (and don't perform as well).

I think "bag of word increase (generally) the dimension of the data and that logistic regression complexity depends on this dimension D" is true for training time but does not really matter at testing time

3

agreed. adding that

1) is wrong because glove crucially needs that the unobserved entries are not counted (similarly, the standard recommender system also can not be obtained with SVD)

4) is wrong since at test time, logistic regression of a bag-of-words input vector (which is very sparse, having only one or a few non-zeros) is much faster than of a dense input such as a word vector. also lookup of word vectors will take time

5

as a follow up question: I was wondering how exactly does the lookup of word vector work?

Add comment